Automate Google Reviews via Text | Staff Workflow on Azalea Health

💡 Azalea Health practices can automate Google review collection via text by connecting Curogram's post-visit workflow to their scheduling data. ...


Aubreigh Lee Daculug


May 14, 2026

A new patient in your area opens Google. They type "best primary care near me." Two clinics show up side by side.

One has 612 reviews and a 4.8-star rating. The other has 34 reviews and a 3.9-star average. The patient taps the first one. They never even see your name.

That's the gap. Not in clinical skill. Not in patient outcomes. In digital visibility.

Meditab IMS is solid clinical software. It helps you chart, schedule, and bill. But it does not ask patients for reviews. It does not survey them after a visit. It does not route happy patients toward Google or unhappy ones toward private feedback.

Reputation lives outside the platform completely.

Most practices try to fix this by hand. The front desk asks at checkout. A staff member sends sporadic emails. A printed card shows up in the discharge folder.

These efforts fade fast. They depend on people remembering, on patients having time, on staff caring enough on a busy day. They almost never scale.

Meanwhile, the practice down the street has automated everything. Their reviews keep climbing. Yours stay frozen.

This is where automated Google review generation changes the math for Meditab IMS practices. Instead of asking your team to remember, the system runs in the background. Post-visit surveys go out after every visit.

Five-star feedback flows publicly. Negative feedback flows privately. Your team does nothing different.

The result feels almost unfair. One multi-location practice using Curogram grew from 993 to 8,159 Google reviews in 16 months. 90% of those were five-star.

This article walks you through how that engine works, why manual efforts keep falling short, and how to build a steady review pipeline without adding work to your front desk.

Meditab IMS does its clinical job well. Charting, scheduling, billing: all there. What it does not include is any form of the reputation management that medical practices on IMS actually need.

Look at the gap feature by feature, and the missing pieces stack up quickly:

So practices have no systematic way to turn satisfied patients into public advocates. The reviews that do appear are either rare and organic or the result of inconsistent staff effort.

Search your own practice name on Google. You might see 28 reviews and a 3.9-star average. Three of those reviews are negative. Maybe one was a billing dispute, one a long wait time, one from a patient who confused you with another office.

Those three reviews shape roughly 10% of your public identity. Meanwhile, the new dermatology office two miles down the road has 400 reviews and a 4.8-star average. They opened last year. You have practiced for fifteen.

Google does not reward tenure. It rewards reviews.

BrightLocal data shows that 87% of consumers read online reviews for local businesses.

Even more telling, 73% only pay attention to reviews written in the last month. A practice with stale reviews — even glowing ones from 2024 — quietly signals inactivity.

Fresh reviews signal a thriving practice. For every patient searching "best [specialty] near me," the practice with more recent, higher-rated reviews wins the click. The math is simple and unforgiving.

A 3.5-star Google rating does not reflect twenty years of clinical excellence.

But the physician feels powerless about it. You cannot force patients to leave reviews, asking at checkout feels awkward, and email blasts get ignored.

The gap between reputation and reality starts to feel permanent. It isn't.

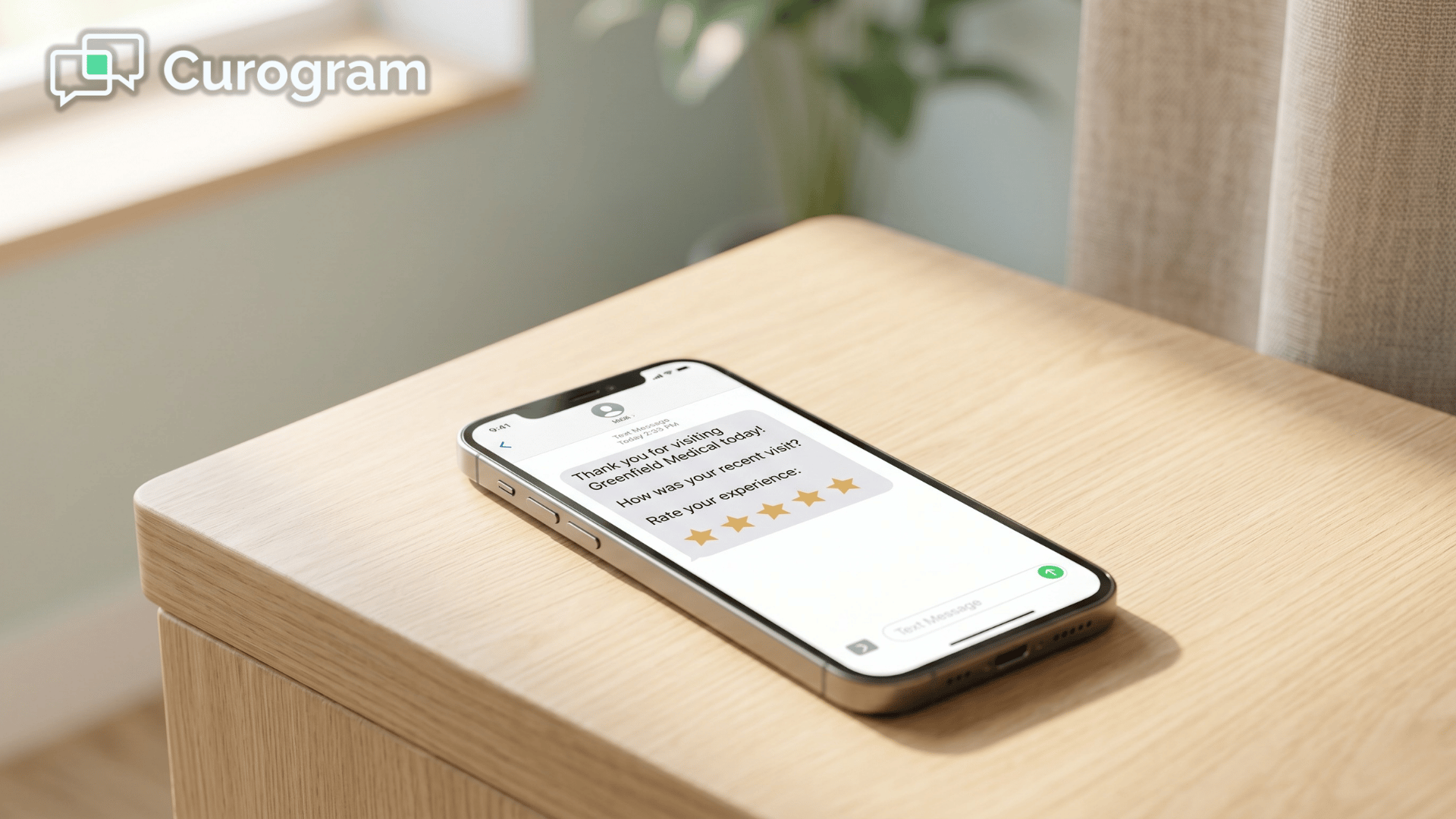

After every completed appointment in your IMS schedule, a short text survey goes out to the patient.

Patients who rate the visit highly get a one-tap link straight to your Google review page. Patients who rate it poorly land on a private feedback form your manager sees first.

Your staff do nothing. Reviews accumulate quietly in the background.

This is the core of Meditab IMS Google reviews automation: take the human asking out of the loop entirely.

The survey uses one simple satisfaction question. A high score sends the patient down the public path — the Google review link. A low score sends them down a private path where your team can respond before frustration becomes a public complaint.

This routing protects your public profile while still surfacing what needs to improve. There are no staff judgment calls and no manual sorting.
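The routing rule described above can be sketched in a few lines. This is only an illustration, not Curogram's actual implementation: the 1-to-5 scale, the threshold of 4, and every name here (`SurveyResponse`, `route_response`, the two path labels) are assumptions, since the article only says "high score" and "low score".

```python
from dataclasses import dataclass

# Assumption: a 1-5 satisfaction scale where 4 or 5 counts as "high".
# The article does not state the real scale or cutoff.
HIGH_SCORE_THRESHOLD = 4


@dataclass
class SurveyResponse:
    patient_id: str  # hypothetical identifier
    score: int       # 1 (poor) to 5 (excellent), assumed scale


def route_response(response: SurveyResponse) -> str:
    """Return the follow-up path for a single survey response."""
    if not 1 <= response.score <= 5:
        raise ValueError(f"score out of range: {response.score}")
    if response.score >= HIGH_SCORE_THRESHOLD:
        # Public path: one-tap link to the Google review page.
        return "google_review_link"
    # Private path: feedback form the practice manager sees first.
    return "private_feedback_form"
```

Because the decision depends on one number and one threshold, it behaves identically for every patient, which is the point the routing section makes about removing staff judgment calls.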

This post-visit survey routing runs the same way every time for Meditab teams, with no risk of a tired front-desk worker forgetting the script.

90% Five-star share of all reviews generated through Curogram's automated routing in a 16-month case study

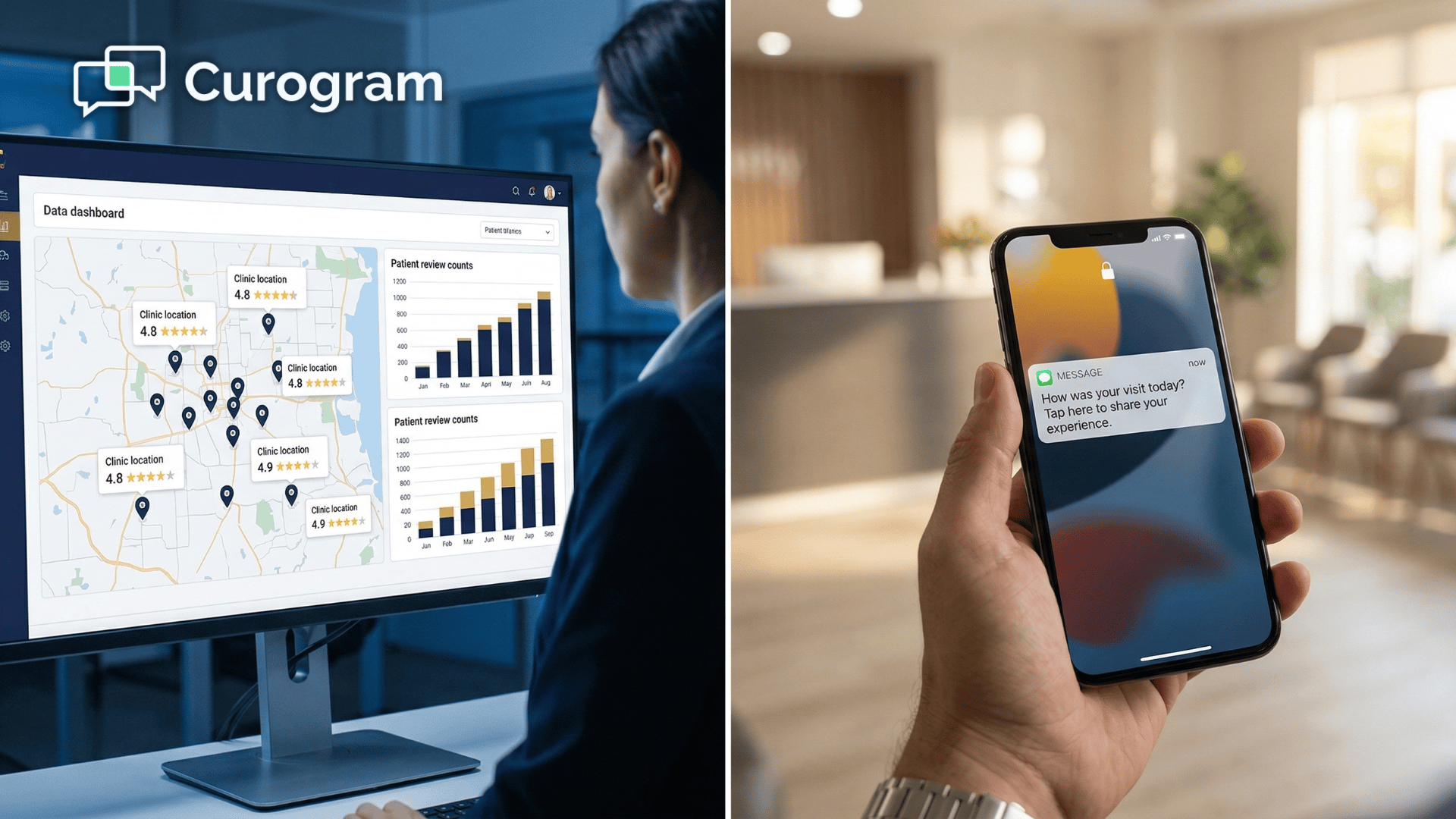

Curogram syncs with your IMS schedule and triggers surveys automatically.

No CSV exports, no manual sends, no list pulling at the end of the day. The system already knows who was seen and when.

Surveys typically fire one to two hours after the visit. That timing matters — the experience is fresh, the patient is still thinking about you, and the response rate is much higher than a survey that lands days later.
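As a rough illustration of that timing rule, the send time is just the end of the visit plus a delay. The 90-minute default below is an assumption (the midpoint of the one-to-two-hour window mentioned above), and `survey_send_time` is a hypothetical helper, not part of any Curogram or IMS API.

```python
from datetime import datetime, timedelta

# Window taken from the article: one to two hours after the visit.
MIN_DELAY = timedelta(hours=1)
MAX_DELAY = timedelta(hours=2)
# Assumption: use the midpoint of that window as the default.
DEFAULT_DELAY = timedelta(minutes=90)


def survey_send_time(visit_end: datetime,
                     delay: timedelta = DEFAULT_DELAY) -> datetime:
    """Return when the post-visit survey text should go out."""
    if not MIN_DELAY <= delay <= MAX_DELAY:
        raise ValueError("delay must be between one and two hours")
    return visit_end + delay
```

For example, a visit that ends at 10:00 would get its survey at 11:30 under the assumed default.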

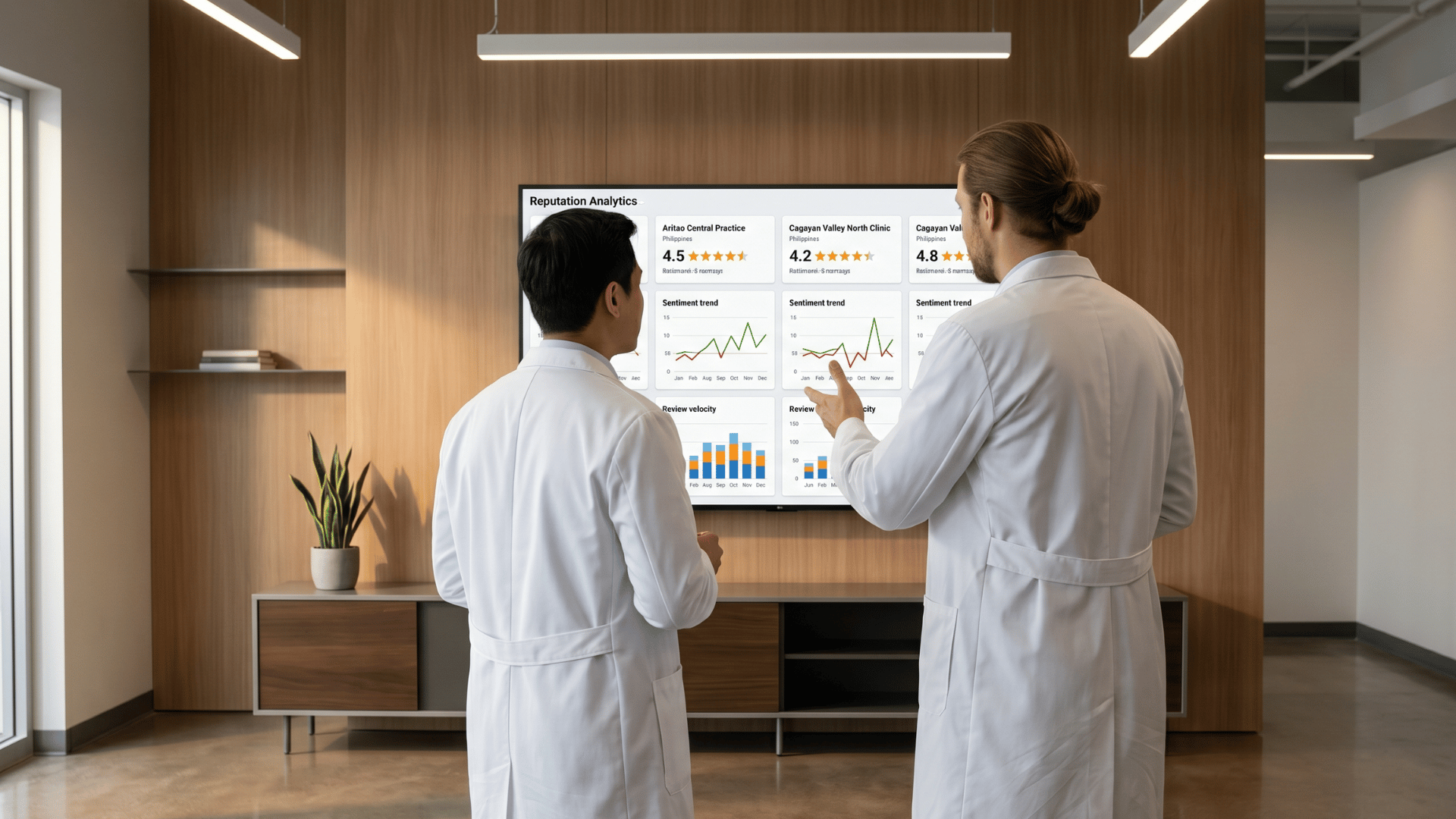

For groups running IMS across several sites, the dashboard pulls every Google profile into one place. Practice directors stop juggling tabs and start seeing the whole operation at once.

The view tracks the metrics that actually move the needle:

This is what turns reputation work from a guessing game into a measurable operation. The numbers are right there, week over week.

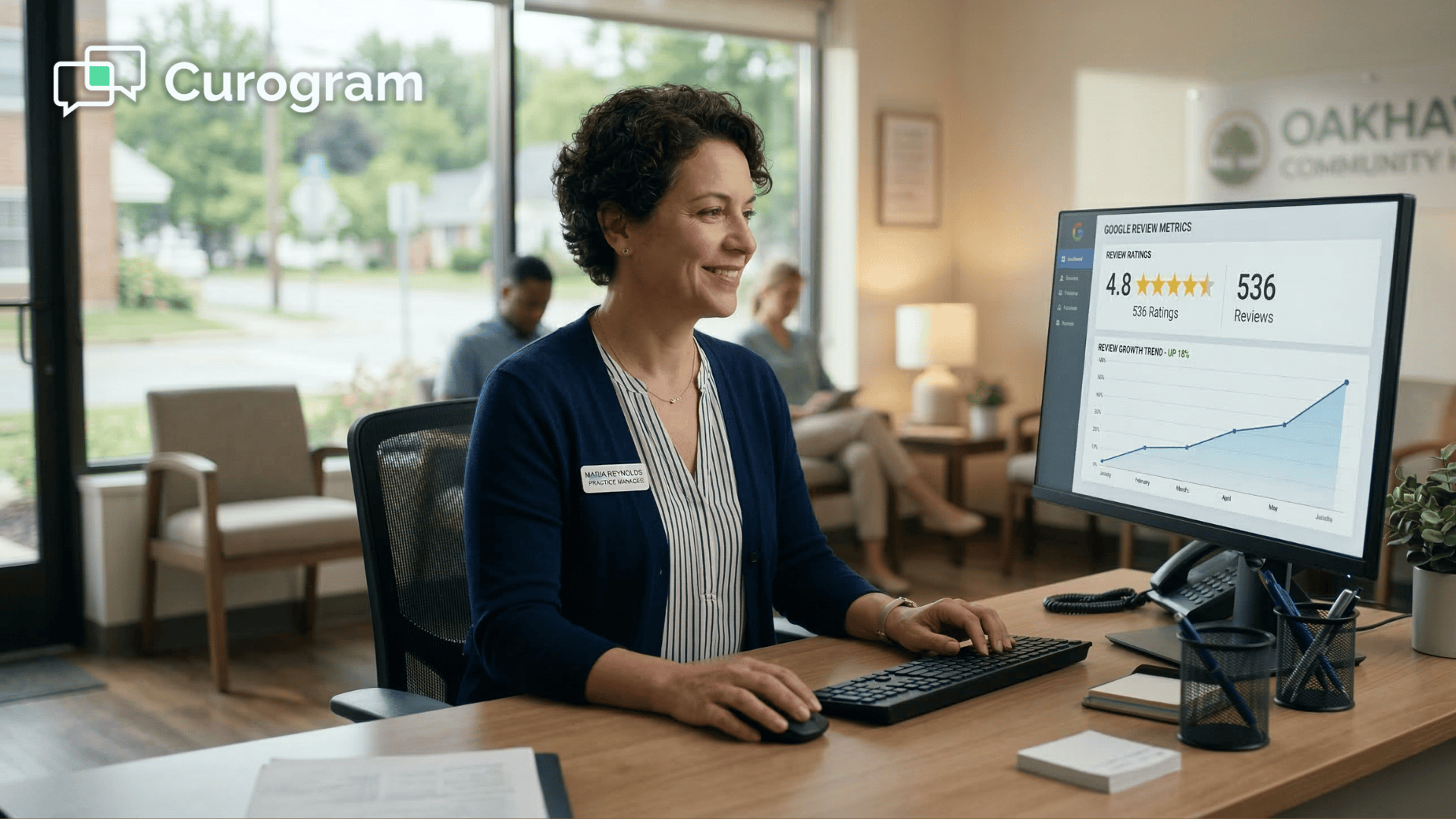

A multi-location practice using Curogram saw a transformation most teams would not believe was possible. The change was not gradual. It was complete.

8,159 Total Google reviews after 16 months, up from 993 at the start

That is not a marginal lift. It is a full rewrite of the practice's online identity. The volume alone creates a competitive moat that rivals cannot quickly match.

Reviews drive visibility. Visibility drives new patients. New patients drive more reviews. Once the system is running, that loop accelerates on its own.

7,166 New reviews added in 16 months after Curogram switched on, with no staff effort beyond initial setup

Your Google ranking improves. New patient inquiries climb. Each new visit is another potential five-star review feeding back into the engine.

This is how Meditab IMS practice owners actually get the higher Google rating they have been quietly chasing for years: by removing the human bottleneck entirely.

Imagine your starting point is 30 reviews and a 3.9-star average. Three months in, you are at 280 reviews. Your average has climbed to 4.7 because the volume of authentic five-star reviews has diluted the older negatives.
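The dilution effect in that scenario is plain weighted-average arithmetic, and a short sketch makes it easy to check. The function names are hypothetical, and the calculation assumes Google's displayed rating behaves like a simple mean of all reviews, which is a simplification.

```python
def blended_rating(old_count: int, old_avg: float,
                   new_count: int, new_avg: float) -> float:
    """Average rating after new reviews are added to existing ones."""
    total = old_count + new_count
    return (old_count * old_avg + new_count * new_avg) / total


def required_new_avg(old_count: int, old_avg: float,
                     total_count: int, target_avg: float) -> float:
    """Average the new reviews must hit to reach a target overall rating."""
    new_count = total_count - old_count
    if new_count <= 0:
        raise ValueError("total_count must exceed old_count")
    return (total_count * target_avg - old_count * old_avg) / new_count


# Scenario from the article: 30 reviews at 3.9 grows to 280 reviews at 4.7.
needed = required_new_avg(30, 3.9, 280, 4.7)
print(f"New reviews must average about {needed:.2f} stars")
```

With the article's numbers, the 250 new reviews need to average roughly 4.8 stars to pull the overall rating up to 4.7, which fits a review stream that is mostly five-star.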

New patients start mentioning the reviews at check-in. "I picked you because of your Google ratings." Your front desk did not ask a single one of them.

Run the math for an average practice and the picture gets clearer:

For your team, this means one thing: you finally get the steady flow of patient reviews that Meditab practices have struggled to generate, without adding a single new task to the front desk.

Your patients already trust you in the exam room. The real question is whether the patients still searching for you online see that same trust reflected back to them.

Meditab IMS gives you everything you need to deliver excellent care, and almost nothing you need to advertise it digitally to the next wave of new patients.

That is the gap Curogram closes. Reputation management for a medical practice on IMS does not have to mean awkward checkout asks or guilty staff reminders.

It can simply mean a survey that fires on its own, sentiment that routes itself, and reviews that grow week after week without anyone touching them.

Think about what this means for the math of your schedule. If your front desk sees 40 patients a day, even modest engagement turns into about 120 new reviews a month. Across a year, that is more than 1,400 new entries on your Google profile, the vast majority of them five-star.
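To see what "modest engagement" means in those figures, you can back out the implied review rate. The sketch below assumes 21 working days per month, which the article does not state, and the function names are hypothetical.

```python
# Assumption: about 21 clinic days per month (not stated in the article).
WORKING_DAYS_PER_MONTH = 21


def implied_review_rate(reviews_per_month: float,
                        patients_per_day: int,
                        working_days: int = WORKING_DAYS_PER_MONTH) -> float:
    """Share of monthly visits that must turn into a Google review."""
    visits = patients_per_day * working_days
    if visits <= 0:
        raise ValueError("visit count must be positive")
    return reviews_per_month / visits


def yearly_reviews(reviews_per_month: float) -> float:
    """Project a steady monthly review count across twelve months."""
    return reviews_per_month * 12


rate = implied_review_rate(120, 40)
print(f"Implied review rate: {rate:.1%}")
print(f"Reviews per year: {yearly_reviews(120):.0f}")
```

Under that assumption, 120 reviews a month from 40 patients a day means roughly one in seven visits ends in a review, and twelve months at that pace gives 1,440 reviews, consistent with the "more than 1,400" figure.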

That kind of volume does not just look good. It changes how Google ranks you. It changes how new patients feel when they line you up next to a competitor. It compounds, month after month, into a moat your rivals cannot quickly copy.

Schedule a Demo with Curogram and see how the post-visit survey, smart sentiment routing, and multi-location review dashboard plug into your existing IMS schedule.

Yes. Curogram's workflow sends a satisfaction survey first, not a direct review request. Patients who report a positive experience are then invited to share it on Google. This follows Google's guidelines on review gating — there are no incentives, and no patient is silenced if they want to leave a public review. The survey simply captures genuine sentiment, and only authentically satisfied patients are pointed toward Google.

Negative responses are routed to a private channel your practice manager monitors. Your team can review the issue, reach out, and try to resolve it directly. In many cases, this turns a frustrated patient into a recovered relationship — and sometimes even a positive one — well before they consider writing a public review.

Most practices see new reviews within the first week of launch. Velocity depends on patient volume, so a practice seeing 40 patients per day will pile up reviews faster than one seeing 10. Even smaller practices typically see 20 to 40 new reviews in the first month, which is usually enough to shift both the star average and the Google search ranking.

No. The workflow runs entirely in the background once it is connected to your IMS schedule. Staff do not need to send anything, sort anything, or remember anything. Most teams are fully trained on the dashboard in under 15 minutes.

Yes. The dashboard tracks review velocity, sentiment, and star rating per provider and per site. Practice directors get one consolidated view, which makes it easy to compare locations, identify lagging sites, and benchmark progress over time.
