Patient Reviews That Reflect Reality | RamSoft

💡 Positive patient reviews at imaging centers on Google ratings are skewed by a structural problem: satisfied patients leave without saying a word...

10 min read

Aubreigh Lee Daculug

April 16, 2026

Your imaging center runs on some of the most advanced clinical technology in radiology. RADPAIR reads studies faster. Your cloud PACS makes images accessible in seconds.

Your AI flags what human eyes might miss. You've invested in the infrastructure of clinical excellence.

And yet, your Google rating is 3.6 stars.

Think about that for a moment. A referring physician's office is deciding where to send their next MRI patient. They open Google. Your center comes up.

So does your competitor down the road — 4.7 stars, 800+ reviews, all glowing.

Yours?

47 reviews, and most of them are complaints about parking and billing. That physician sends the patient somewhere else. Not because your care is worse. Because your reputation doesn't reflect your quality.

This is the defining reputation problem for RamSoft imaging centers, and it is the one automated Google review requests are built to address.

Clinical excellence and online reputation are two completely separate things — and right now, most imaging centers are only winning at one of them.

The gap is not about the quality of your work. It's about the silence of your satisfied patients. The patient who came in anxious, got AI-assisted results within 24 hours, and left feeling relieved? They didn't review you. They went home, made dinner, and forgot.

Meanwhile, the patient who had a billing dispute left a 1-star review the same night. That review is now the loudest voice in the room.

This article breaks down exactly why that happens, why RamSoft's ecosystem — for all its power — doesn't solve it on its own, and how automated post-study review generation finally closes the loop.

The math is straightforward. The fix is simpler than you think. And the results, as you'll see, are not small.

RamSoft's ecosystem is genuinely impressive.

Consider what's already running inside a fully built-out RamSoft environment:

Every piece of this infrastructure is designed to raise the standard of diagnostic care. But here's what none of it does: generate a Google review.

Walk through the patient journey with a satisfied patient. She arrives for a mammogram. The study is processed efficiently. RADPAIR-assisted reads move faster than manual interpretation.

She receives results within 24 hours through Blume, with a clear, plain-language explanation powered by ChatGPT. Everything goes right. She leaves the center feeling relieved and cared for.

She never writes a review.

That's not a failure of your technology or your staff. It's simply human behavior. Happy patients assume their smooth experience is the norm.

They don't feel the urge to say anything because nothing felt remarkable enough to comment on — even though it was. They move on with their day.

Here's where the numbers start to sting.

A typical imaging center processes around 40 studies per day.

That's roughly 1,200 patients every month. Of those 1,200, only about 2 to 3 will leave an organic Google review without being asked. That's a 0.2% review rate.

And of that 0.2%, the majority are unhappy patients — the ones who felt ignored, had a billing dispute, or waited too long. They're motivated. Satisfied patients are not.
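As a quick check on those figures, here is a minimal Python sketch of the arithmetic. It assumes a 30-day month and one study per patient, and the function name is illustrative only.

```python
def organic_review_rate(studies_per_day, reviews_per_month, days_per_month=30):
    """Return (monthly patients, organic review rate as a percentage)."""
    # Assumption: one study equals one patient, and a month has 30 days.
    monthly_patients = studies_per_day * days_per_month
    rate_pct = reviews_per_month / monthly_patients * 100
    return monthly_patients, rate_pct


patients, low_pct = organic_review_rate(40, 2)
_, high_pct = organic_review_rate(40, 3)
print(f"{patients} patients per month, organic review rate {low_pct:.2f}% to {high_pct:.2f}%")
```

Two to three reviews out of 1,200 patients works out to between 0.17% and 0.25%, which is where the rounded 0.2% figure comes from.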

The result is a Google rating that looks like this:

| What's Real | What Google Shows |
|---|---|
| 1,197 satisfied patients per month | 2–3 reviews, mostly negative |
| 98% diagnostic accuracy (AI-assisted) | 3.6-star average from 47 reviews |
| Clean facility, professional staff | Complaints about parking, billing |
| Clinical excellence | Invisible |

Your competitor five miles away actively asks for reviews. They have 4.7 stars from 800+ reviews. Referring physicians see that before they ever look at your clinical credentials. Insurance networks listing multiple imaging options default to the higher-rated center.

Direct-choice patients — especially those choosing where to go for a mammogram or elective MRI — pick based on Google ratings. This is not speculation. It's how healthcare consumers actually behave today.

"Your AI reads studies with extraordinary accuracy. Your Google rating says 3.6 stars because of two billing complaints and one comment about parking. Your clinical excellence is invisible."

That's the real cost of doing nothing.

The problem isn't that your patients don't care about their experience. Most of them do. The problem is that no one in your current workflow ever asks them to share it.

And asking is not as simple as it sounds.

Your staff is focused on clinical throughput. After a study is complete, the priority is processing results, managing the next patient, and keeping the workflow moving.

Asking each patient individually to leave a Google review — at scale, consistently, across every study — is simply not realistic for a human team to maintain.

RamSoft's PowerServer platform and its partner apps are built for clinical workflow excellence. That's their job, and they do it well. But imaging center review generation isn't a gap these tools were designed to fill.

Blume deepens engagement with returning patients who download the app. But many radiology patients — especially first-time mammogram or one-time MRI patients — will never download it.

They visit once. They have a great experience. And then they disappear, forever unreached.

The organic review rate stays at 0.2%. The 1-star reviews keep accumulating. The referring physicians keep choosing the higher-rated center down the road.

It sounds like a small operational gap. It isn't. It's a revenue leak that compounds quietly, month after month, as your online rating drifts further from your actual quality.

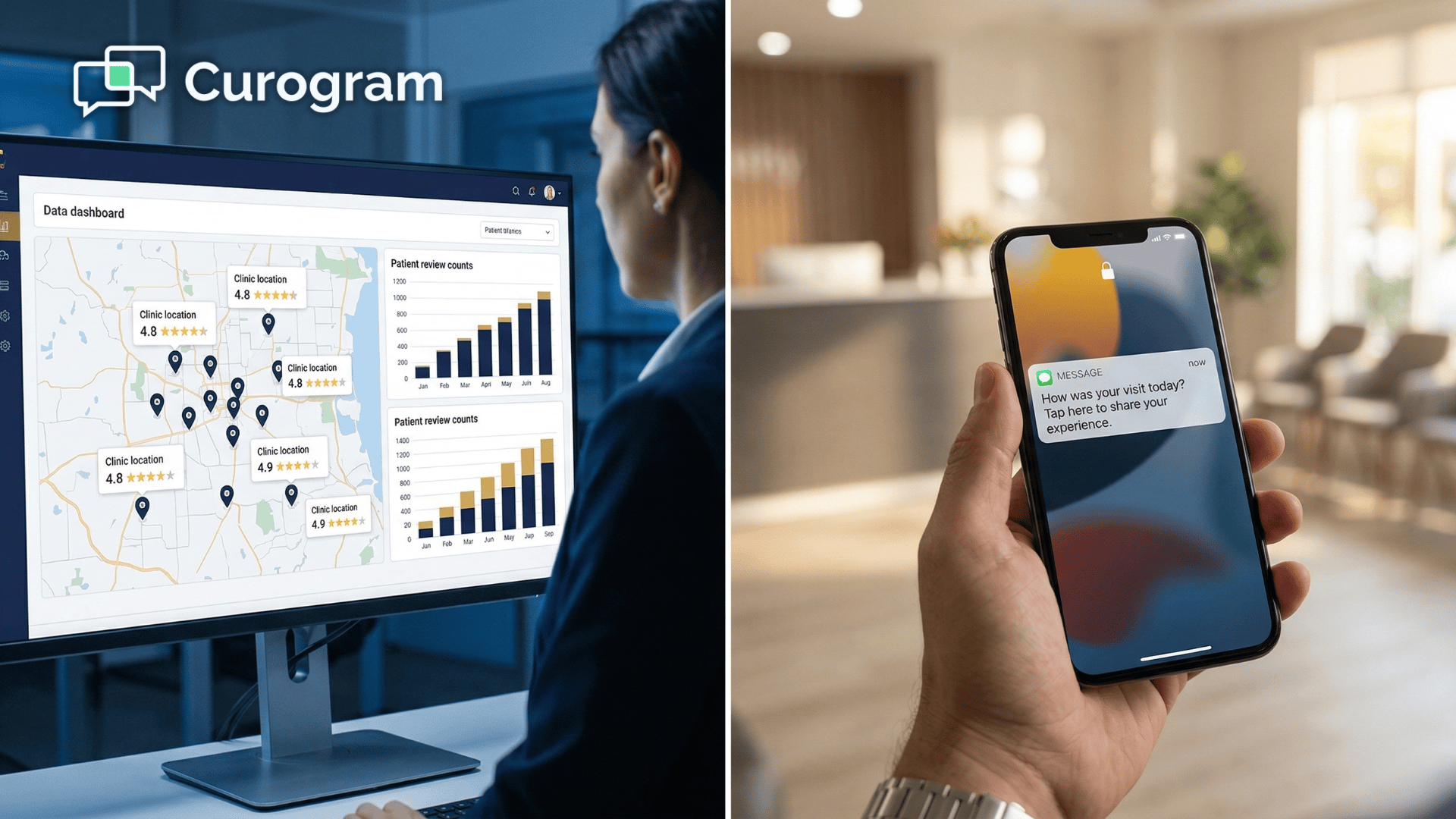

The way to automate patient reviews for a RamSoft imaging center isn't a new portal, another app download, or a complicated workflow integration. It's a text message.

Immediately after a study is complete — or when results are delivered, depending on your workflow — the patient receives a short, personalized SMS.

Something like:

"Hi [Patient Name]. Thanks for choosing us for your MRI today. If your experience was positive, we'd love your Google review — it only takes 30 seconds. [Link]"

The patient taps the link. They leave a review. No login. No app. No friction.

It works because it meets patients where they already are: on their phones, right when the experience is still fresh.

That's the core of what Curogram delivers for RamSoft imaging centers: a text-based automation layer that runs parallel to your clinical workflow and converts satisfied patients into public advocates, without adding a single task to your staff's plate.
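To make the message template quoted earlier concrete, here is a small Python sketch that fills it in per patient. The function name, the sample patient, and the example.com link are hypothetical placeholders, not part of Curogram's or RamSoft's actual API, and the sketch only builds the text without sending it.

```python
def build_review_sms(patient_name, study_type, review_link):
    """Fill in the review-request template with one patient's details."""
    # Hypothetical helper for illustration; it does not send anything.
    return (
        f"Hi {patient_name}. Thanks for choosing us for your {study_type} today. "
        "If your experience was positive, we'd love your Google review. "
        f"It only takes 30 seconds. {review_link}"
    )


message = build_review_sms("Maria", "MRI", "https://example.com/review")
print(message)
```

Keeping the template in one function means the wording can be adjusted in a single place while the patient name, study type, and link vary per message.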

Not every patient will respond positively. That's fine — and actually useful.

Curogram's review automation uses smart sentiment routing.

When a patient indicates a positive experience, the review goes straight to Google.

When a patient signals frustration or a problem, the response is captured privately through an internal feedback form instead.

Your team gets an immediate alert. You can reach out, resolve the issue, and prevent a 1-star review from going public.
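The routing described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Curogram's implementation: the names `Destination`, `RoutingDecision`, and `route_response` are hypothetical, and the patient's signal is simplified to a single yes-or-no answer.

```python
from dataclasses import dataclass
from enum import Enum


class Destination(Enum):
    GOOGLE_REVIEW = "google_review"
    PRIVATE_FEEDBACK = "private_feedback"


@dataclass
class RoutingDecision:
    destination: Destination
    alert_team: bool


def route_response(was_positive: bool) -> RoutingDecision:
    """Decide where a patient's response goes and whether staff are alerted."""
    # Assumption: the patient's sentiment arrives as a simple boolean.
    if was_positive:
        return RoutingDecision(Destination.GOOGLE_REVIEW, alert_team=False)
    # Negative signals go to the internal form and trigger a staff alert.
    return RoutingDecision(Destination.PRIVATE_FEEDBACK, alert_team=True)


happy = route_response(True)
unhappy = route_response(False)
print(happy.destination.value, unhappy.destination.value, unhappy.alert_team)
```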

This is the difference between passive reputation management and active reputation management.

You're not just hoping Google shows your best side. You're actively shaping what goes public while using negative feedback constructively to improve your service.

Curogram's text-based review automation works alongside RamSoft's clinical infrastructure and Blume without any conflict.

The two systems serve distinct purposes:

Together, they cover ground that neither covers alone. Blume deepens the relationship with existing, engaged patients. Curogram converts the full patient population — one-time visitors included — into review-generating advocates.

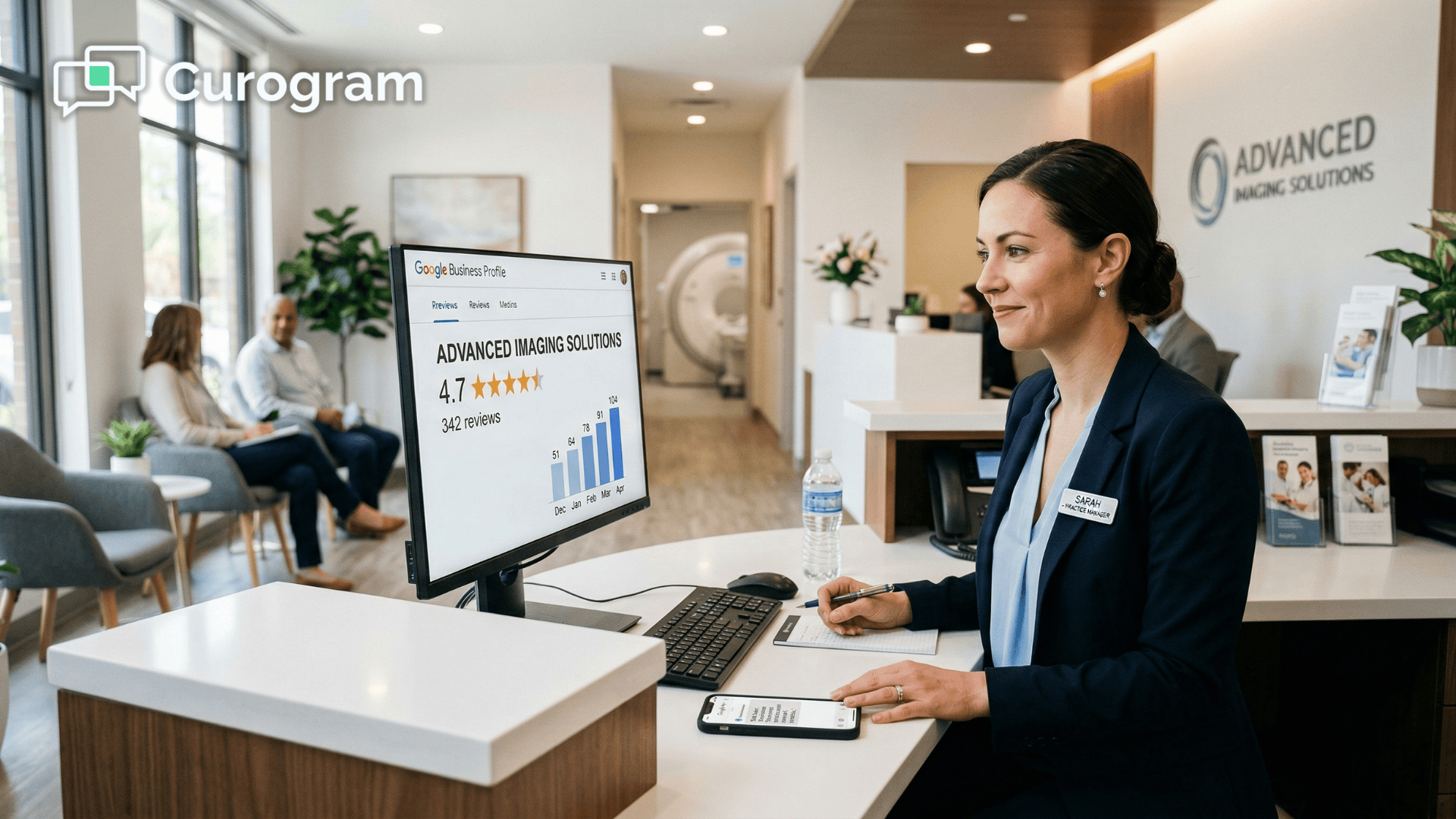

For multi-location imaging networks that run PowerServer with centralized management, Curogram also offers a network-wide reputation dashboard.

You can see total review counts and ratings by location, monthly review volume, sentiment trends, and service recovery alerts — all from one screen. A VP of Operations managing 10 or 20 imaging centers can see exactly which locations have reputation gaps, which are performing well, and where to direct improvement efforts.

This is not a theoretical case.

A real multi-location imaging practice came to Curogram with 993 total Google reviews across all locations.

Their average rating was 3.6 stars — shaped almost entirely by the small fraction of patients who had negative experiences. Their clinical quality was strong. Their online reputation said otherwise.

After deploying automated post-study review texts through Curogram, here's what happened over 90 days:

| Metric | Before | After (90 Days) |
|---|---|---|
| Total Google Reviews | 993 | 8,159 |
| New Reviews Generated | n/a | 7,166 |
| New 5-Star Reviews | n/a | 1,064 |
| Average Star Rating | 3.6 | 4.7 |

Of the 7,166 new reviews generated, about 15% were 5-star ratings. That's 1,064 patients who had excellent experiences and, for the first time, were given a simple way to say so.
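The case-study figures can be cross-checked with a short Python sketch. The variable names are illustrative, and the inputs are taken directly from the table above.

```python
reviews_before = 993
reviews_after = 8_159
new_five_star = 1_064

# New reviews are the difference between the two totals.
new_reviews = reviews_after - reviews_before
# Share of new reviews that were 5-star, as a percentage.
five_star_share_pct = new_five_star / new_reviews * 100
# How many times larger the review count became.
growth_multiple = reviews_after / reviews_before

print(f"{new_reviews} new reviews, {five_star_share_pct:.1f}% five-star, "
      f"{growth_multiple:.1f}x total review count")
```

The difference matches the 7,166 new reviews in the table, and 1,064 of 7,166 is just under 15%.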

Let's apply this to a single imaging center doing 40 studies per day.

Starting with a monthly patient volume of 1,200, the contrast between doing nothing and deploying automation is stark:

Organic reviews top out at 2 or 3 per month at a 0.2% rate, while a conservative 10% conversion through automated texts brings in 120 new reviews every month — roughly 1,440 per year.
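Here is a minimal Python sketch of that comparison. The function name is hypothetical, and the 0.2% and 10% rates are the figures used in this article rather than guaranteed outcomes.

```python
def projected_reviews(monthly_patients, conversion_rate):
    """Return (reviews per month, reviews per year) for a given conversion rate."""
    # round() gives whole reviews and avoids floating-point noise.
    per_month = round(monthly_patients * conversion_rate)
    return per_month, per_month * 12


organic = projected_reviews(1200, 0.002)    # 0.2% organic rate
automated = projected_reviews(1200, 0.10)   # 10% conversion via automated texts
print(f"Organic: {organic[0]} per month, {organic[1]} per year")
print(f"Automated: {automated[0]} per month, {automated[1]} per year")
```

At these rates the sketch gives 2 reviews a month organically versus 120 a month with automated texts, or 24 versus 1,440 over a year.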

Over time, that's the difference between a 3.6-star profile and a 4.7-star profile. And a 4.7-star profile is not just a number that looks better. It changes real decisions.

Referring physicians don't choose imaging partners based on clinical white papers. They scan Google, ask colleagues, and go with the center that looks trustworthy.

Raising a radiology practice's Google rating from 3.6 to 4.7 is not cosmetic. It directly influences referral volume.

Consider this:

If a 4.7-star rating captures just 2 additional self-referred patients per month — patients choosing your center for MRI, mammography, or ultrasound — at an average study value of $400, that's $800 per month in new revenue.

Over a year, that's $9,600 from two patients per month. Scale that across a 10-location network, and the number becomes meaningful very quickly.
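The same revenue arithmetic, written as a small Python sketch. It assumes every location gains the same 2 patients per month at the same $400 average study value, which is a simplification, and the function name is illustrative.

```python
def added_annual_revenue(extra_patients_per_month, avg_study_value, locations=1):
    """Estimate yearly revenue from additional self-referred patients."""
    # Assumption: each location sees the same uplift and study value.
    return extra_patients_per_month * avg_study_value * 12 * locations


single_center = added_annual_revenue(2, 400)
ten_locations = added_annual_revenue(2, 400, locations=10)
print(f"Single center: ${single_center:,} per year")
print(f"10-location network: ${ten_locations:,} per year")
```

Under those assumptions a single center adds $9,600 a year and a 10-location network adds $96,000.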

This means radiology online reputation is not a marketing nice-to-have. It's an operational and revenue driver.

Most imaging centers today have what you could call a passive reputation. Their Google profile reflects only the patients who were motivated enough to leave a review on their own — and those are almost always the unhappy ones.

The other 99.8% of patients, the ones who had great experiences, are simply silent.

Automated review generation flips this. Your Google profile shifts from a complaint box to an accurate reflection of your actual patient population.

The 1,197 satisfied patients start speaking. The 3 unhappy ones don't disappear, but they're no longer the only voices in the room.

Once your online reputation catches up to your clinical standards, several things shift — not gradually, but as a direct result of the rating change.

Referring physicians see a 4.7-star center and send patients confidently, no longer second-guessing. Insurance networks listing multiple imaging options give your location prominence. Direct-choice patients pick you over the competitor they found first on Google.

That's the outcome of getting imaging center review generation right. Your clinical excellence — the accuracy, the speed, the AI-powered infrastructure — finally has the public proof to back it up.

Your clinical operation is already performing at a high level. The patients who walk out of your imaging center satisfied — and there are hundreds of them every month — are your strongest marketing asset.

Right now, most of them are leaving without saying a word.

Automating Google review requests for a RamSoft imaging center is not a complicated initiative. It doesn't require a new system, new staff training, or changes to your clinical workflow.

It requires a text message sent at the right moment, with smart routing that protects your reputation while capturing actionable feedback.

One practice went from 993 reviews to 8,159 in 90 days. That's not a coincidence.

That's what happens when a system that was always delivering excellent care finally starts telling people about it.

Curogram works alongside your existing RamSoft infrastructure — including Blume, PowerServer, and your scheduling and intake workflows — to add the one layer that's missing: a direct, automated pathway from completed study to published Google review.

It reaches every patient, including the first-time visitors who will never engage with another portal or app. It runs in the background, costs your staff zero additional effort, and compounds over time into a Google profile that actually reflects your quality.

For multi-location networks, the case is even clearer. Centralized reputation dashboards let leadership see exactly where each location stands, track progress month by month, and identify service improvement opportunities — all from one place.

Your diagnostic quality is already there. Now it's time to make it visible.

Schedule a Demo and see how Curogram turns every completed study into an opportunity to build the reputation your imaging center has already earned.

Yes. HIPAA allows healthcare providers to send marketing and communications, including review requests, to patients who have provided consent. Standard practice is to obtain that consent at intake or first visit, which most imaging centers already do. Curogram's text-based automation integrates with your RamSoft workflow and consent management so that every review request is sent only to patients who have opted in. The reviews themselves, once published to Google, contain no protected health information (PHI). Patients share their names and star ratings. Nothing clinical is included in what goes public.

Automated review requests are completely legitimate, provided no incentive is offered. Google's guidelines specifically prohibit incentivized reviews — for example, offering a discount in exchange for a rating. Requesting a review with no strings attached is not only allowed, it's recommended. Google's own Business Profile documentation encourages businesses to ask satisfied customers for reviews. Healthcare review platforms like Healthgrades and Zocdoc use this exact model. What Curogram automates is the asking — the reviews themselves are genuine responses from real patients. Google has no issue with this practice when done correctly.

Yes. Curogram's enterprise dashboard is built for multi-location imaging networks. From a single interface, your leadership team can see the total review count and star rating for each location, monthly review volume across the network, sentiment trends, and service recovery alerts whenever negative feedback is flagged before it goes public. Each location maintains its own Google Business Profile. What Curogram adds is a centralized management layer so that network-level leaders can identify which locations have reputation gaps, which are performing well, and where to prioritize service improvements. You don't need to log into 20 different accounts to stay on top of your reputation.

Most imaging centers start seeing new reviews within the first few days of deployment — sometimes within hours of the first texts going out. Volume builds steadily from there as more studies are completed and more texts are sent. The 993-to-8,159 case study unfolded over 90 days, but meaningful rating movement typically begins within the first 30. The speed depends on your daily study volume and your existing review baseline. A center doing 40 studies a day will accumulate reviews faster than one doing 15. What stays consistent across all cases is the direction: up.

Yes, and this is actually one of the strongest arguments for text-based review requests over app-based approaches. SMS requires no download, no account, no password. A patient taps a link and lands directly on your Google review form. That's it. Text message open rates in healthcare consistently run above 90%, compared to email open rates that often fall below 20%. Older patients who would never download Blume or navigate a patient portal can still respond to a simple text. The friction is as low as it gets, which is precisely why conversion rates hold even across mixed patient demographics.
