NextGen Review Automation | Network-Wide Google Reputation at Scale

💡 For large NextGen Enterprise networks, Google reviews remain untapped. Neither NextGen nor Luma sends automated post-visit review texts or...

Aubreigh Lee Daculug

May 1, 2026

Picture this. A patient in your service area opens Google, types in "cardiologist near me," and sees three options. Yours has 47 reviews and a 4.2-star average. The competitor down the street has 612 reviews and a 4.7-star average.

Who do you think gets the appointment?

For most multi-location health systems running on Oracle Health, this scene plays out hundreds of times a day. Every clinic, every specialty, every city.

And most of those losses are invisible. Patients never call. They never explain why. They just choose someone else.

The frustrating part? It is not a clinical quality problem. Your providers are excellent. Your outcomes are strong.

But your Google Business Profiles do not reflect that, because review collection has been left to chance, scattered across 12, 50, or 200 locations with no real system behind it.

Here is the deeper issue. About 90% of new patients now research a provider's Google profile before scheduling.

That means your reputation on Google is doing more patient acquisition work than your website, your paid ads, and your referral relationships combined. And if you are managing a large network on Oracle Health Cerner Millennium, every disconnected workflow makes the gap wider.

It sounds simple to fix. It isn't.

You need consistent review generation across every site. You need fast, HIPAA-compliant responses. You need sentiment data that flows back into operational decisions.

And you need all of that without overwhelming your front desk teams or creating new compliance risk.

This guide walks medical practices through exactly how to build that system.

You will see what works at scale, where most networks fail, and how multi-location Google review management workflows built on Oracle Health can move you from reactive to proactive in 90 days or less.

For an Oracle Health network, Google Reviews are not a vanity metric. They are the front door to your entire patient acquisition engine.

Before a single appointment is booked, your reputation has already done most of the convincing. The gap between practices that manage this well and those that don't is widening every quarter, and at the multi-location level, that gap compounds across every site.

Roughly 90% of new patients check a provider's Google Business Profile before scheduling. In a study tracking patient behavior across 847 practices using Oracle Health, that same number showed up consistently.

Most new-patient inquiries originated from Google search and Business Profile visibility.

That means Google has quietly become the highest-traffic step in your funnel. It outranks your website, your paid ads, and even your referral relationships in initial touchpoints. For your team, this is the first impression you cannot afford to lose.

Volume and rating do not just look good. They convert. Multi-location systems running 50+ sites have seen a 34% lift in new-patient conversion when locations carry 200+ reviews and a 4.7+ star rating, compared to those under 50 reviews or below 4.2 stars.

In practice, the patient brain treats review count like a proxy for clinical reliability.

A provider with 600 reviews feels safer than one with 30, even if the clinical quality is identical.

A single one-star review with a detailed complaint can suppress click-through on your Google Business Profile by 8 to 12% for two to four months.

Even if you collect 20 new five-star reviews during that window, the damage lingers, consistent with findings on the effects of negative online reviews on patient choice and healthcare utilization.

For a busy urgent care location, that drop can mean 40 to 80 missed inquiries a month. It compounds quickly across a network. This is why proactive review generation and fast response protocols are not optional for enterprise practices.

Most Oracle Health systems sit on top of patient communication data that is perfectly positioned to drive review generation. The problem is that the data and the review workflow rarely talk to each other.

When they do, the lift is significant, and the work that used to require a separate marketing tech stack starts happening inside the systems your team already uses every day.

Oracle Health's patient communication module integrates directly with review collection workflows. Through the Cerner Millennium environment, practices can automate review requests across the patient portal, SMS, and post-appointment email.

This closed-loop approach is the difference maker. Review requests reach patients at peak satisfaction moments, right after a successful visit, screening, or preventive care touchpoint.

That timing alone moves response rates more than any clever messaging.

When you connect aggregated review data to a reputation dashboard inside the Oracle Health ecosystem, the patterns become hard to ignore.

The recurring themes that surface across hundreds of reviews tend to fall into a few buckets:

This is not just marketing data. It is operational intelligence, reflecting the growing role of healthcare sentiment analysis and patient feedback analytics in quality improvement systems.

Every review collection, monitoring, and response workflow inside the Oracle Health ecosystem can comply with HIPAA's Minimum Necessary Standard.

No PHI is captured, stored, or exposed in public review responses.

That matters because most reputation tools were not built for healthcare. With healthcare-grade integration, your compliance, marketing, and clinical operations teams stop pulling in three different directions.

Single-location reputation management is hard. Multi-location reputation management at enterprise scale is a different problem entirely.

The shift from one Google Business Profile to fifty changes the game from tactical to operational, and the systems that worked at the practice level start to break under network-wide volume.

A 1,200-bed health system in the Southeast managing cardiology, orthopedics, primary care, and urgent care moved from scattered review monitoring to a single Oracle Health-integrated dashboard. Typical response time fell from between 7 and 14 days to between 2 and 3 hours.

Within six months, the network's average star rating improved by 23%.

The change was not about adding more staff. It was about giving existing staff one place to see everything, prioritize what matters, and act fast.

Enterprise practices need rules, not improvisation.

A tiered response model works well across multi-location networks:

| Review Type | Response Path | Approval Level |
|---|---|---|
| 5-star reviews | Public thank-you, clinical recognition | Site staff |
| 4-star reviews | Private outreach, targeted improvement offer | Site manager |
| 1-3 star reviews | Quality assurance review, director approval | Director or above |

For your team, this prevents the worst-case scenario:

A defensive, off-brand response to a sensitive review that ends up screenshotted on social media.

It also keeps clinical leadership in the loop on anything that touches safety or legal exposure.
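As a minimal sketch, the tiered model can be written as a small routing function. The names `Route` and `route_review` are hypothetical and are not part of any Oracle Health or Curogram API. The strings come straight from the table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    """Where a review goes and who signs off (hypothetical structure)."""
    response_path: str
    approval_level: str


def route_review(stars: int) -> Route:
    """Map a star rating to the response path and approver from the tier table."""
    if not 1 <= stars <= 5:
        raise ValueError("stars must be between 1 and 5")
    if stars == 5:
        return Route("Public thank-you, clinical recognition", "Site staff")
    if stars == 4:
        return Route("Private outreach, targeted improvement offer", "Site manager")
    # Anything from 1 to 3 stars goes through quality assurance first.
    return Route("Quality assurance review, director approval", "Director or above")
```

Keeping the rules in one function means every location applies the same tiers, which is the point of having rules instead of improvisation.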

COOs and CMOs increasingly use review data to identify specialty-level performance gaps and facility-level infrastructure issues.

A pediatric practice in California analyzed feedback and found that 40% of negative reviews mentioned appointment availability.

After a staffing reallocation, patient satisfaction scores rose by 18% in 90 days. The reviews did not just measure the problem. They pointed to the solution.

Most networks know they need more reviews. The hard part is generating them consistently, across every location, without burning out staff or creating compliance issues.

The fix is workflow design, not effort. Once the right pieces are connected to your Oracle Health environment, review volume becomes a predictable output instead of a project.

The single biggest variable in review response rate is timing. When a provider completes clinical documentation in Oracle Health, an automated workflow can fire a review request within 2 hours of visit completion.

Response rates improve by 22 to 31% inside that 2-hour window. They drop by 60% if the request waits longer than 48 hours.

The lesson:

Speed is not optional, and only automation can deliver it consistently across a large network.
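To make the timing rule concrete, here is a rough sketch that classifies a pending request by how long ago the visit was completed. The function name and the three labels are assumptions made for illustration. Only the 2-hour and 48-hour thresholds come from the figures above.

```python
from datetime import datetime, timedelta

SEND_WINDOW = timedelta(hours=2)   # window with the best response rates
STALE_AFTER = timedelta(hours=48)  # past this point, response rates fall sharply


def classify_request_timing(visit_completed_at: datetime, now: datetime) -> str:
    """Return 'on_time', 'late', or 'stale' for a review request sent at `now`."""
    elapsed = now - visit_completed_at
    if elapsed < timedelta(0):
        raise ValueError("visit completion time is in the future")
    if elapsed <= SEND_WINDOW:
        return "on_time"
    if elapsed <= STALE_AFTER:
        return "late"
    return "stale"
```

A scheduler could use a check like this to flag locations where requests routinely slip past the 2-hour mark.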

Generic messaging underperforms. A cardiology practice asking about "your experience with our cardiology team" earns more responses than a generic "leave us a review" prompt. An urgent care center emphasizing speed and convenience does the same.

Tailored messaging lifts completion rates by 12 to 18% over generic requests. Across a 50-location network, that difference adds up to thousands of extra reviews a year.

A health system with 400,000 active patients found a clear split in how patients want to be contacted for feedback:

By distributing requests across all three channels in the first 90 minutes after a visit, the practice raised review capture from 18% to 31%.

For a network handling 100,000 visits a year, that is the difference between 18,000 reviews and 31,000. Not a minor lift. A different competitive position.
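The arithmetic behind that comparison is simple enough to check in a few lines. This is only a worked example. The helper name `projected_reviews` is made up for illustration.

```python
def projected_reviews(visits_per_year: int, capture_rate: float) -> int:
    """Estimate yearly reviews as visits multiplied by the capture rate."""
    if not 0.0 <= capture_rate <= 1.0:
        raise ValueError("capture_rate must be between 0 and 1")
    return round(visits_per_year * capture_rate)


before = projected_reviews(100_000, 0.18)  # capture rate before the change
after = projected_reviews(100_000, 0.31)   # capture rate after the change
extra = after - before                     # additional reviews per year
```

With the article's numbers, `before` is 18,000, `after` is 31,000, and `extra` is 13,000 additional reviews a year.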

Review generation gets risky fast when teams cut corners on incentives or compliance. Healthcare has stricter rules than most industries, and the penalties for getting it wrong land squarely on the practice.

The good news is that there is plenty of upside available without ever crossing those lines, as long as your team understands where they are.

Direct payment for reviews is off the table. So is conditioning rewards on a positive rating.

What is allowed:

Open drawings that are not tied to review content.

A practice offering a 1-in-500 drawing for a $25 gift card increased review volume by 45% while staying inside Google's and CMS guidelines.

For your team, the rule of thumb is simple. You can incentivize the act of leaving feedback. You cannot incentivize the content of that feedback.

When provider compensation includes a reputation metric, behavior changes fast.

One surgery center applied a 5% bonus multiplier for departments holding a 4.7+ star average with 150+ reviews. Average ratings climbed from 4.3 to 4.8 stars in six months.

The shift was not about pressure. It was about visibility. Once providers saw their reputation as part of their professional scorecard, they engaged with patient feedback like they engage with clinical outcomes.

A 15-location health system named a "Top-Reviewed Practice of the Month" in internal newsletters and town halls. System-wide ratings rose from 4.4 to 4.7 stars in nine months. Providers started bringing review feedback into team meetings on their own.

Sometimes the cheapest reputation tool is a leaderboard.

How you respond matters as much as how many reviews you collect.

A great response strategy turns a negative review into a recovery moment. A weak one amplifies the damage.

At the network level, the difference between these two outcomes usually comes down to whether your team has a system or just good intentions.

Most networks need 8 to 12 response templates covering the most common review scenarios:

Templates are then customized with location names, provider names, and department details during implementation.

The result is a response composition time of 2 to 3 minutes per review, while the message still reads like a real human wrote it.
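One way to keep templated responses fast and safe is to allow only a short list of non-patient fields. The sketch below uses Python's standard `string.Template`. The field list and the function name `render_response` are assumptions, not a description of any vendor's template engine.

```python
from string import Template

# Only site-level details may be merged into a public response (assumed list).
ALLOWED_FIELDS = {"location", "provider", "department", "phone"}


def render_response(template_text: str, **fields: str) -> str:
    """Fill a response template, rejecting any field outside the allowed list."""
    unknown = set(fields) - ALLOWED_FIELDS
    if unknown:
        raise ValueError(f"fields not allowed in public responses: {sorted(unknown)}")
    # substitute() raises KeyError if the template needs a field that was not given.
    return Template(template_text).substitute(fields)
```

For example, `render_response("Thank you for visiting $location.", location="Main Street Clinic")` returns a finished sentence, while passing something like a diagnosis field raises an error before anything is published.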

Across 320 practices studied, 38% of patients who left one-star reviews and received a personalized, empathetic response (not defensive) eventually updated their review to a higher rating.

A pediatric practice in Ohio used this protocol and converted 12 one-star reviews into 4- or 5-star reviews within 60 days. Their average rose from 4.1 to 4.6 stars.

In practice, the formula is the same every time:

Acknowledge, apologize for the experience, offer a private channel, never argue.

When a review mentions safety concerns, clinical errors, or possible legal exposure, it should automatically escalate to the practice director, medical director, and quality officer before any public response goes out. This governance prevents off-the-cuff responses that create new exposure.

For Oracle Health-connected workflows, this escalation can be built directly into the response queue. No one publishes blind.
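A very rough sketch of that escalation gate is shown below. The keyword list is a placeholder invented for illustration, and simple substring matching will miss plenty of cases, so treat it as a starting point and not as a real classifier. The three approver roles come from the paragraph above.

```python
# Hypothetical trigger terms; a real deployment would need a reviewed list.
ESCALATION_TERMS = (
    "safety", "unsafe", "misdiagnos", "wrong medication",
    "lawsuit", "lawyer", "attorney", "malpractice",
)
ESCALATION_APPROVERS = ("practice director", "medical director", "quality officer")


def required_approvers(review_text: str) -> tuple:
    """Return the roles that must sign off before a public reply is posted."""
    text = review_text.lower()
    if any(term in text for term in ESCALATION_TERMS):
        return ESCALATION_APPROVERS
    return ()
```

An empty result means the review follows the normal tiered path. A non-empty result means the reply stays in the queue until each listed role has approved it.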

This is what enterprise reputation management looks like when the system is built correctly. The numbers are not theoretical.

They came from a real 12-location health system facing a real crisis, and the playbook is repeatable for any Oracle Health network in a similar position.

The network ran primary care, cardiology, and orthopedics across 12 locations. Combined review volume across all 12 Google Business Profiles sat at 993 reviews, roughly 83 per location. The average star rating was 4.2.

That sounds acceptable, until you look at the competition. Three competing networks in the same geographic market averaged 4.6 to 4.8 stars.

New-patient volume had dropped 8% year-over-year despite more marketing spend. Exit surveys showed 72% of lost inquiries cited "low review volume" or "insufficient reputation information."

+721% Increase in total reviews across 12 sites — from 993 to 8,159 in 90 days.

Translation: every dollar of marketing was being undercut by a reputation gap, and the volume problem was the most visible symptom of it.

The system implemented an Oracle Health-integrated reputation management platform with four moving parts:

Nothing exotic here. Just consistent execution at scale, plugged into workflows the team was already running.

The volume gain was distributed unevenly across the network. The strongest performer — a single cardiology site — drove a disproportionate share of the new review flow, which is exactly what you want to see when provider engagement is the variable.

+1,920% Top-performing cardiology site went from 34 reviews to 687 in 90 days.

Lower-performing locations still moved, just at smaller multiples. The variance itself became useful data, pointing leadership toward sites where provider buy-in or post-visit workflow consistency needed attention.
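The headline percentages follow directly from the before and after counts. A short worked check, with a helper name chosen only for illustration:

```python
def percent_increase(before: int, after: int) -> float:
    """Percentage growth from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (after - before) / before * 100


network_lift = percent_increase(993, 8159)  # all 12 sites combined
top_site_lift = percent_increase(34, 687)   # top-performing cardiology site
```

Here `network_lift` comes out at about 721.7 and `top_site_lift` at about 1,920.6, which line up with the +721% and +1,920% figures once the decimals are dropped.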

Reputation lifts only matter if they show up in the patient acquisition funnel. In months 2 and 3 of the intervention, that signal arrived clearly.

+34% New-patient inquiry volume rose in months 2 and 3, after a year of stagnation.

Sentiment analysis on the new reviews showed that 87% of patients mentioned improved wait times, clinical expertise, or staff friendliness. Those are the same themes the messaging campaign emphasized. The system did not just generate reviews. It steered the narrative.

For your team, the takeaway is simple.

A 721% increase in review volume is not a marketing story. It is the result of process, automation, and consistent leadership signals.

If your Oracle Health network is running reviews the way most large systems do, with scattered ownership and inconsistent timing, you are leaving real patient volume on the table.

Every week without a connected workflow is another week of new-patient inquiries quietly going to a competitor with a stronger Google profile.

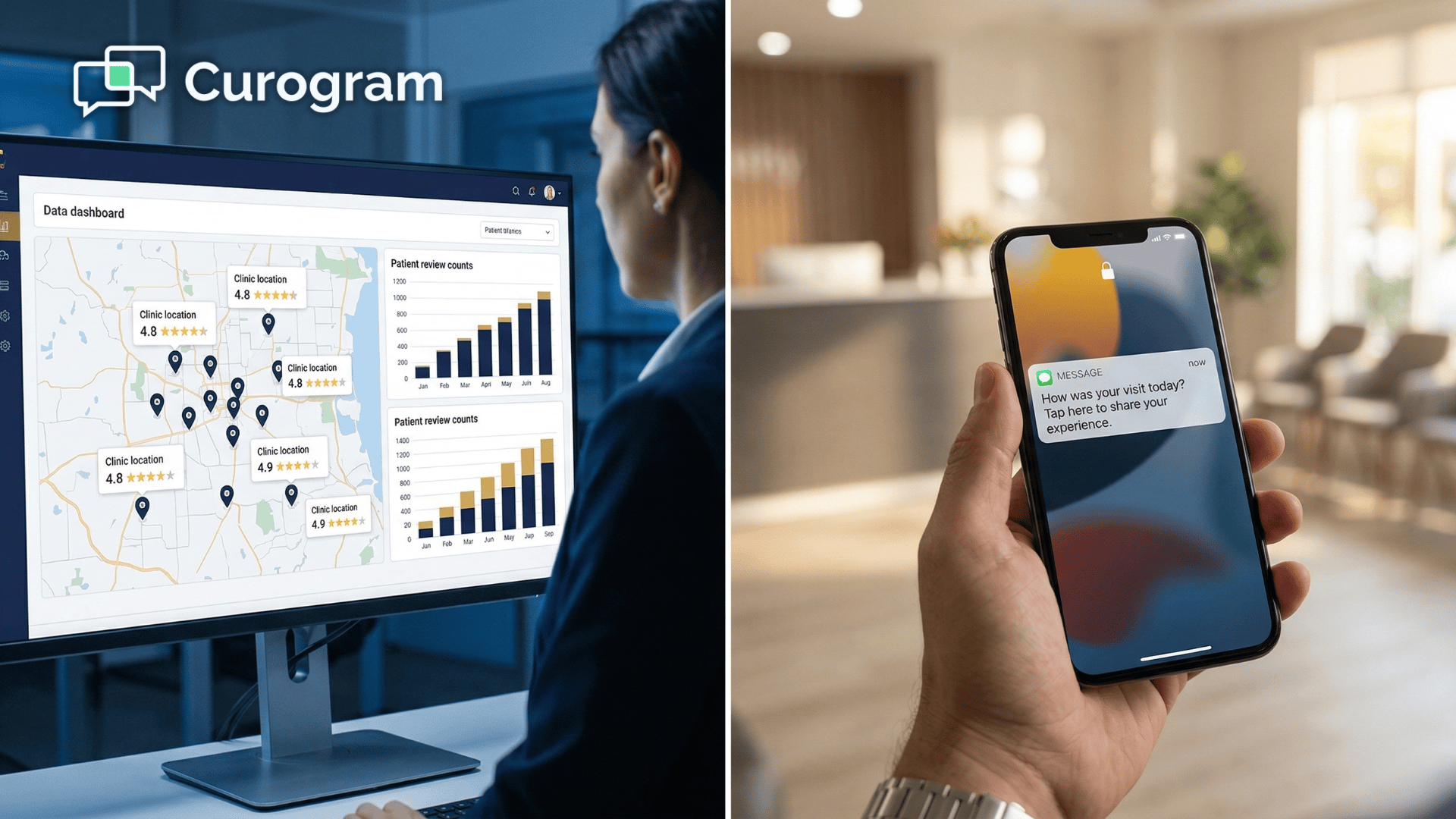

Curogram closes that gap. Our HIPAA-compliant patient texting and engagement platform integrates with Oracle Health to automate post-appointment review requests, distribute them across email, SMS, and patient portal channels, and feed responses back into a single multi-location dashboard.

The case study above is not a one-off. Curogram has helped multi-location practices generate 1,000+ new five-star reviews in 90 days by combining the right timing, the right messaging, and the right compliance guardrails.

Front desk teams stop chasing reviews manually. Directors stop guessing which sites need attention. Marketing stops fighting reputation gaps with paid spend.

For COOs, CIOs, and clinical operations leaders, the practical wins show up fast. Faster response times. Cleaner sentiment data. Stronger ratings across every site. Better new-patient conversion.

And a reputation system that scales as your network grows, without adding administrative load.

Schedule a demo with Curogram to see exactly how this works for your network. We will walk you through a reputation-specific dashboard tour, show how multi-location monitoring and review automation perform inside an Oracle Health environment, and run a baseline review of your current review volume, response time, and sentiment trends.

HIPAA compliance starts with the Minimum Necessary Standard. Do not include or reference patient names, medical conditions, appointment dates, or clinical details in public responses. Phrase responses generally: "We appreciate you sharing feedback about your recent visit," not "We're glad your knee surgery recovery is progressing well."

The industry standard is one automated review request per patient per calendar year. A patient who visits 3 to 4 times yearly should still receive only one automated request, ideally tied to their most successful visit. Any requests sent to multi-visit patients should be spaced at least 6 to 9 months apart.
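As a sketch, a frequency cap like this can be enforced with one date check before each send. The function name and the choice of 180 days as the minimum gap (the low end of the 6 to 9 month range) are assumptions for illustration.

```python
from datetime import date, timedelta
from typing import Optional

MIN_GAP = timedelta(days=180)  # roughly 6 months, the low end of the range


def may_send_request(last_request: Optional[date], today: date) -> bool:
    """Allow a request only if none was sent this calendar year or too recently."""
    if last_request is None:
        return True  # the patient has never been asked
    same_year = last_request.year == today.year
    too_soon = today - last_request < MIN_GAP
    return not same_year and not too_soon
```

Checking both conditions covers the edge case where a December request and a January request fall in different calendar years but only weeks apart.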

Never respond with public anger or accusations. That amplifies the review and invites more. Instead, take these steps. Flag the review to Google with documentation of why it violates platform policies. Respond briefly and calmly: "We appreciate your feedback. If you'd like to discuss your experience, please contact our patient relations team at [phone]."

Look at four numbers. Review velocity, meaning new reviews per week per location, tells you whether your engine is still running. Response time, the average hours between review and your reply, predicts how often you convert negatives to positives. Sentiment themes, surfaced through AI analysis, point to your top operational issues. Patient source attribution shows what percentage of new patients cite reviews as their discovery source, tying reputation to revenue.
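Three of those four numbers are plain arithmetic, sketched below with hypothetical function names. Sentiment themes are left out because they depend on whatever analysis tool the network uses.

```python
from datetime import datetime
from statistics import mean
from typing import List, Tuple


def review_velocity(new_reviews: int, weeks: float, locations: int) -> float:
    """New reviews per week per location."""
    return new_reviews / (weeks * locations)


def average_response_hours(pairs: List[Tuple[datetime, datetime]]) -> float:
    """Average hours between a review being posted and the reply."""
    hours = [(replied - posted).total_seconds() / 3600 for posted, replied in pairs]
    return mean(hours)


def attribution_share(patients_citing_reviews: int, new_patients: int) -> float:
    """Percentage of new patients who cite reviews as their discovery source."""
    return 100 * patients_citing_reviews / new_patients
```

For instance, 240 new reviews over 4 weeks across 12 locations works out to a velocity of 5 reviews per week per location.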

Most multi-location networks see measurable lift within 30 to 45 days of full implementation. Review volume typically rises first, often doubling or tripling in the first month once automated post-visit requests are running. Star ratings move next, usually shifting by 0.2 to 0.4 points within 60 to 90 days as new five-star reviews dilute older negative ones.
